Kubernetes supports many types of volumes, and a pod can use any number of volume types simultaneously. Ephemeral volumes share the lifetime of their pod, whereas persistent volumes exist beyond it: when a pod ceases to exist, Kubernetes destroys its ephemeral volumes but does not destroy persistent volumes.

At its core, a volume is a directory, possibly with some data in it, which is accessible to the containers in a pod. How that directory comes to be, the medium that backs it, and the contents of it are determined by the particular volume type used.
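As a rough illustration of a volume being a directory shared by the containers in a pod, the manifest below is a minimal sketch using an ephemeral `emptyDir` volume. The pod name, container names, image tag, and mount paths are all assumptions chosen for the example.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: shared-volume-demo        # hypothetical name
spec:
  containers:
    - name: writer                # writes a file into the shared directory
      image: busybox:1.36
      command: ["sh", "-c", "echo hello > /data/greeting && sleep 3600"]
      volumeMounts:
        - name: scratch
          mountPath: /data
    - name: reader                # sees the same directory under a different path
      image: busybox:1.36
      command: ["sh", "-c", "sleep 3600"]
      volumeMounts:
        - name: scratch
          mountPath: /input
          readOnly: true
  volumes:
    - name: scratch
      emptyDir: {}                # ephemeral: removed when the pod is deleted
```

Both containers see the same files, and the directory disappears together with the pod, which is the ephemeral behaviour described above.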

Types of Persistent Volumes

PersistentVolume types are implemented as plugins. Kubernetes currently supports the following plugins:

- csi – Container Storage Interface (CSI)

- fc – Fibre Channel (FC) storage

- hostPath – HostPath volume (for single node testing only; WILL NOT WORK in a multi-node cluster; consider using local volume instead)

- iscsi – iSCSI (SCSI over IP) storage

- local – local storage devices mounted on nodes

- nfs – Network File System (NFS) storage
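Each plugin in the list above corresponds to a field of the same name in the PersistentVolume spec. The following is a minimal sketch of a statically defined NFS-backed PV; the object name, server address, export path, and capacity are placeholders, not values from a real cluster.

```yaml
apiVersion: v1
kind: PersistentVolume
metadata:
  name: nfs-pv-example              # hypothetical name
spec:
  capacity:
    storage: 5Gi                    # advertised size (placeholder)
  accessModes:
    - ReadWriteMany
  persistentVolumeReclaimPolicy: Retain
  nfs:                              # the plugin-specific block
    server: nfs.example.internal    # placeholder NFS server
    path: /exports/data             # placeholder export path
```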

Access Modes

A PersistentVolume can be mounted on a host in any way supported by the resource provider. As shown in the table below, providers will have different capabilities and each PV’s access modes are set to the specific modes supported by that particular volume. For example, NFS can support multiple read/write clients, but a specific NFS PV might be exported on the server as read-only. Each PV gets its own set of access modes describing that specific PV’s capabilities.

The access modes are:

- ReadWriteOnce

  The volume can be mounted as read-write by a single node. ReadWriteOnce can still allow multiple pods to access the volume when those pods are running on the same node.

- ReadOnlyMany

  The volume can be mounted as read-only by many nodes.

- ReadWriteMany

  The volume can be mounted as read-write by many nodes.

- ReadWriteOncePod

  FEATURE STATE: Kubernetes v1.27 [beta]

  The volume can be mounted as read-write by a single pod. Use the ReadWriteOncePod access mode if you want to ensure that only one pod across the whole cluster can read that PVC or write to it. This mode is only supported for CSI volumes and Kubernetes version 1.22+.

In the CLI, the access modes are abbreviated to:

- RWO – ReadWriteOnce

- ROX – ReadOnlyMany

- RWX – ReadWriteMany

- RWOP – ReadWriteOncePod

| Volume Plugin | ReadWriteOnce | ReadOnlyMany | ReadWriteMany | ReadWriteOncePod |
|---|---|---|---|---|
| AzureFile | ✓ | ✓ | ✓ | – |
| CephFS | ✓ | ✓ | ✓ | – |
| CSI | depends on the driver | depends on the driver | depends on the driver | depends on the driver |
| FC | ✓ | ✓ | – | – |
| FlexVolume | ✓ | ✓ | depends on the driver | – |
| GCEPersistentDisk | ✓ | ✓ | – | – |
| Glusterfs | ✓ | ✓ | ✓ | – |
| HostPath | ✓ | – | – | – |
| iSCSI | ✓ | ✓ | – | – |
| NFS | ✓ | ✓ | ✓ | – |
| RBD | ✓ | ✓ | – | – |
| VsphereVolume | ✓ | – | – (works when Pods are collocated) | – |
| PortworxVolume | ✓ | – | ✓ | – |
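A claim requests access modes through `spec.accessModes`, and it can only bind to a PV that offers the requested mode. The sketch below asks for ReadWriteOncePod; the claim name and size are placeholders, and it assumes the cluster has a default StorageClass backed by a CSI driver that supports this mode.

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: single-writer-claim     # hypothetical name
spec:
  accessModes:
    - ReadWriteOncePod          # shown as RWOP in kubectl output
  resources:
    requests:
      storage: 1Gi              # placeholder size
```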

Storage Class

A PV can have a class, which is specified by setting the storageClassName attribute to the name of a StorageClass. A PV of a particular class can only be bound to PVCs requesting that class. A PV with no storageClassName has no class and can only be bound to PVCs that request no particular class.
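The binding rule can be sketched with two claims. The first requests a class called `fast`, which is a hypothetical name, and can only bind to PVs whose `storageClassName` is `fast`. The second sets `storageClassName` to the empty string, which requests a PV with no class. All names and sizes are placeholders.

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: claim-with-class        # hypothetical name
spec:
  storageClassName: fast        # hypothetical class; binds only to PVs of class "fast"
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 1Gi
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: claim-without-class     # hypothetical name
spec:
  storageClassName: ""          # empty string: binds only to PVs that have no class
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 1Gi
```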

Kubernetes StorageClass provides a way for administrators to describe the “classes” of storage they offer. Different classes might map to quality-of-service levels, to backup policies, or to arbitrary policies determined by the cluster administrators.

Each StorageClass has a provisioner that determines what volume plugin is used for provisioning PVs.

Kubernetes doesn’t include an internal NFS provisioner. You need to use an external provisioner to create a StorageClass for NFS.
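For orientation, a StorageClass that delegates to an external provisioner might look roughly like the sketch below. The class name and the provisioner string are hypothetical; the real provisioner string must match whatever name the deployed external provisioner registers under.

```yaml
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: example-nfs                     # hypothetical class name
provisioner: example.com/external-nfs   # hypothetical; must match the external provisioner's name
reclaimPolicy: Delete                   # what happens to a PV once its claim is deleted
volumeBindingMode: Immediate            # provision as soon as the PVC is created
mountOptions:
  - nfsvers=4.1                         # assumed NFS version, adjust to your server
```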

NFS subdir external provisioner

NFS subdir external provisioner is an automatic provisioner that uses your existing and already configured NFS server to support dynamic provisioning of Kubernetes Persistent Volumes via Persistent Volume Claims. Persistent volumes are provisioned as ${namespace}-${pvcName}-${pvName}.

An NFS storage provisioner is sufficient for development environments and workloads with low I/O performance requirements.

Deployment With Helm:

This chart installs a custom storage class into a Kubernetes cluster using the Helm package manager. It also installs an NFS client provisioner into the cluster which dynamically creates persistent volumes from a single NFS share.

$ helm repo add nfs-subdir-external-provisioner https://kubernetes-sigs.github.io/nfs-subdir-external-provisioner/

$ helm install nfs-subdir-external-provisioner nfs-subdir-external-provisioner/nfs-subdir-external-provisioner --create-namespace -n nfs-provisioner --set nfs.server=x.x.x.x --set nfs.path=/exports --set storageClass.name=nfs-client --set storageClass.onDelete=delete

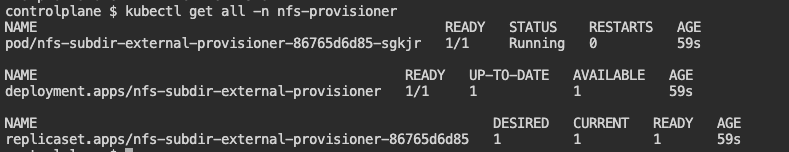
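The same settings can also be kept in a values file instead of a series of `--set` flags. The sketch below mirrors the flags used in the install command; the file name `values.yaml` is an assumption, and `x.x.x.x` remains a placeholder for the NFS server address.

```yaml
# values.yaml (assumed file name)
nfs:
  server: x.x.x.x        # placeholder: address of the existing NFS server
  path: /exports         # exported directory used as the provisioning root
storageClass:
  name: nfs-client
  onDelete: delete       # remove the backing directory when the PVC is deleted
```

It would then be passed to Helm with `-f values.yaml` in place of the individual `--set` arguments.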

Verification

$ kubectl get all -n nfs-provisioner

$ kubectl get sc

$ kubectl describe sc nfs-client

Test NFS subdir external provisioner.

$ kubectl apply -f https://raw.githubusercontent.com/kubernetes-sigs/nfs-subdir-external-provisioner/master/deploy/test-claim.yaml

$ kubectl apply -f https://raw.githubusercontent.com/kubernetes-sigs/nfs-subdir-external-provisioner/master/deploy/test-pod.yaml

$ kubectl get pvc

$ kubectl exec nfs-server-6b4cbd849c-8p6c8 -- ls /exports/default-test-claim-pvc-324a066b-0c40-436e-a9c8-0cbc47a2dda3
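For readers who prefer to see what such a test involves, the manifests below are a sketch of a claim against the `nfs-client` class and a pod that writes a marker file into it. They approximate the idea of the upstream test files and are not a verbatim copy; the image tag, mount path, and marker file name are assumptions.

```yaml
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: test-claim
spec:
  storageClassName: nfs-client     # class created by the Helm chart
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 1Mi
---
apiVersion: v1
kind: Pod
metadata:
  name: test-pod
spec:
  restartPolicy: Never
  containers:
    - name: test-pod
      image: busybox:stable        # assumed image tag
      command: ["/bin/sh", "-c", "touch /mnt/SUCCESS"]   # assumed marker file
      volumeMounts:
        - name: nfs-pvc
          mountPath: /mnt
  volumes:
    - name: nfs-pvc
      persistentVolumeClaim:
        claimName: test-claim
```

If provisioning works, the marker file should appear in the claim's subdirectory on the NFS share, which is what the `ls` command above checks.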

NFS provisioner limitations/pitfalls:

- The provisioned storage is not guaranteed. You may allocate more than the NFS share’s total size. The share may also not have enough storage space left to actually accommodate the request.

- The provisioned storage limit is not enforced. The application can expand to use all the available storage regardless of the provisioned size.

- Storage resize/expansion operations are not presently supported in any form.

Configuration

The following table lists the configurable parameters of the Helm chart and their default values.

| Parameter | Description | Default |
|---|---|---|
| replicaCount | Number of provisioner instances to deploy | 1 |
| strategyType | Specifies the strategy used to replace old Pods by new ones | Recreate |
| image.repository | Provisioner image | registry.k8s.io/sig-storage/nfs-subdir-external-provisioner |
| image.tag | Version of provisioner image | v4.0.2 |
| image.pullPolicy | Image pull policy | IfNotPresent |
| imagePullSecrets | Image pull secrets | [] |
| storageClass.name | Name of the storageClass | nfs-client |
| storageClass.defaultClass | Set as the default StorageClass | false |
| storageClass.allowVolumeExpansion | Allow expanding the volume | true |
| storageClass.reclaimPolicy | Method used to reclaim an obsoleted volume | Delete |
| storageClass.provisionerName | Name of the provisioner | null |
| storageClass.archiveOnDelete | Archive PVC when deleting | true |
| storageClass.onDelete | Strategy on PVC deletion. Overrides archiveOnDelete when set to lowercase values ‘delete’ or ‘retain’ | null |
| storageClass.pathPattern | Specifies a template for the directory name | null |
| storageClass.accessModes | Set access mode for PV | ReadWriteOnce |
| storageClass.volumeBindingMode | Set volume binding mode for Storage Class | Immediate |
| storageClass.annotations | Set additional annotations for the StorageClass | {} |
| leaderElection.enabled | Enables or disables leader election | true |
| nfs.server | Hostname of the NFS server (required) | null (ip or hostname) |
| nfs.path | Basepath of the mount point to be used | /nfs-storage |
| nfs.mountOptions | Mount options (e.g. ‘nfsvers=3’) | null |
| nfs.volumeName | Volume name used inside the pods | nfs-subdir-external-provisioner-root |
| nfs.reclaimPolicy | Reclaim policy for the main nfs volume used for subdir provisioning | Retain |
| resources | Resources required (e.g. CPU, memory) | {} |
| rbac.create | Use Role-based Access Control | true |
| podSecurityPolicy.enabled | Create & use Pod Security Policy resources | false |
| podAnnotations | Additional annotations for the Pods | {} |
| priorityClassName | Set pod priorityClassName | null |
| serviceAccount.create | Should we create a ServiceAccount | true |
| serviceAccount.name | Name of the ServiceAccount to use | null |
| serviceAccount.annotations | Additional annotations for the ServiceAccount | {} |
| nodeSelector | Node labels for pod assignment | {} |
| affinity | Affinity settings | {} |
| tolerations | List of node taints to tolerate | [] |
| labels | Additional labels for any resource created | {} |
| podDisruptionBudget.enabled | Create and use Pod Disruption Budget | false |
| podDisruptionBudget.maxUnavailable | Set maximum unavailable pods in the Pod Disruption Budget | 1 |
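To show how several of these parameters combine, here is a sketch of a values file that overrides a handful of defaults. The specific choices (two replicas, a default class, a `Retain` policy, the path template) are illustrative assumptions rather than recommendations. The list form of `nfs.mountOptions` and the `${.PVC.<field>}` syntax for `pathPattern` are taken from memory of the chart's documentation and should be checked against the chart version in use.

```yaml
replicaCount: 2                    # leader election (enabled by default) picks the active instance
storageClass:
  name: nfs-client
  defaultClass: true               # PVCs without a storageClassName use this class
  reclaimPolicy: Retain
  archiveOnDelete: true            # keep data of deleted PVCs in an archived directory
  pathPattern: "${.PVC.namespace}-${.PVC.name}"   # assumed template syntax
nfs:
  server: x.x.x.x                  # placeholder NFS server address
  path: /exports
  mountOptions:                    # assumed to be a list of mount option strings
    - nfsvers=4.1
```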


References: